AI Accuracy Indicator

Know how reliable your AI systems really are, with measurable trust indicators.

Transparent Explainability

Understand why AI systems make decisions, so outcomes are clear and defensible

Humans in the Loop

Maintain human oversight to ensure AI decisions are responsible and accountable

Security & Guardrails

Coming Soon

Why Businesses Don't Trust AI

As AI becomes a core part of cyber risk management, trust is non-negotiable

No standardized framework to determine whether an AI system is safe and trustworthy.

No measurable way to enforce governance, manage risk, or scale.

Poor transparency makes outcomes difficult to explain or audit.

Static, one-time observations fail to detect unpredictable behavior.

Difficult to benchmark or compare models, vendors, and use cases.

Introducing: SAFE AURA

(AI Usage Reliability & Assurance)

A Production-Grade Framework to Evaluate, Govern, and Enhance AI Systems

Standardized AI Trust Framework

Introduces a structured way to measure whether AI systems are safe, reliable, and enterprise-ready.

Measurable AI Accuracy

Quantifies AI performance across multiple parameters, enabling business leaders to optimize, improve, and communicate trust.

Continuous AI Observability

Provides real-time visibility to monitor AI behavior and how models perform across real-world scenarios.

Dimensions of SAFE AURA

Trust Indicators

Accuracy

Measures how consistently AI delivers correct and reliable results

Explainability

Makes AI decisions clear, understandable, and easy to justify.

Security

Prevents harmful or non-compliant outcomes by enforcing strict guardrails.

Reliability

Ensures consistent performance across scenarios, environments, and over time

Human Control

Maintains human oversight to validate and intervene when needed

How does it work?

The Difference Is Architectural, Not Incremental

| Dimension | Before SAFE AURA | With SAFE AURA |
|---|---|---|
| Accuracy | Teams hope AI outputs are correct | Teams know when AI can be trusted |
| Scaling | Manual verification slows scaling | Scales safely across business workflows |
| Security | Problems surface after AI fails | Risk signals are identified proactively |
| Explainability | Leaders hesitate to rely on AI-driven outcomes | Decisions are clear, defensible, and explainable |
| Human Control | Heavy manual oversight slows decisions | Humans stay in control without slowing AI |

The Enterprises That Act Today Will Set the Standard for the Future

Give us 30 minutes and we'll show you why our customers trust us.

Take SAFE for a Test Drive

Frequently Asked Questions

How does SAFE AURA evaluate AI systems?

SAFE AURA evaluates AI systems across seven key dimensions of trust: accuracy, explainability, safety, reliability, latency, human oversight, and business impact. These signals are continuously measured and aggregated into the AI Trust Score. For more information, see SAFE’s AI Policy.

Can SAFE AURA monitor AI systems in production?

Yes. SAFE AURA supports continuous monitoring of AI systems both before and after deployment, enabling organizations to detect drift, degradation, and unexpected behavior as models operate in real-world environments. For more information, see SAFE’s AI Policy.

How does SAFE AURA help with AI governance?

SAFE AURA provides measurable signals that support AI governance and accountability. By translating AI behavior into structured metrics and trust scores, it lets organizations enforce policies, audit AI performance, and maintain oversight. For more information, see SAFE’s AI Policy.

Who should use the SAFE AURA framework?

The SAFE AURA framework is designed for organizations deploying AI systems across business operations. It helps security teams, risk leaders, AI engineers, and executives evaluate AI trust and manage AI-related risk.

How does AI work in SAFE products?

SAFE uses a combination of open-source, self-hosted, and third-party hosted models for generative AI within the platform (e.g., processing uploaded information, agentic workflow optimization, and insight generation). Models are selected through dynamic routing, and neither SAFE nor its providers train models on customer data. Prompts may be stored for user history. For more information, see SAFE’s AI Policy.

Does SAFE train AI models on customer data?

No. SAFE does not use any customer inputs, outputs, or tenant data to train or improve models or services. Data is processed solely to generate the requested outputs. For more information, see SAFE’s AI Policy.

Latest from SAFE

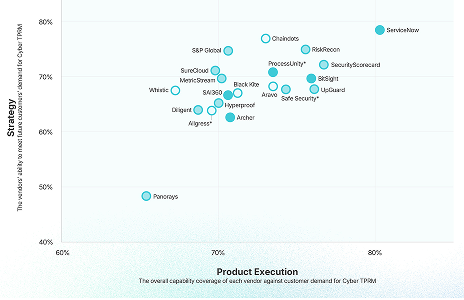

Liminal Names SAFE a Leader in the 2025 Link Index™ Report

SAFE TPRM recognized as the #1 solution in product capability by Liminal for its 100% automated, AI-driven approach.

View Analyst Report

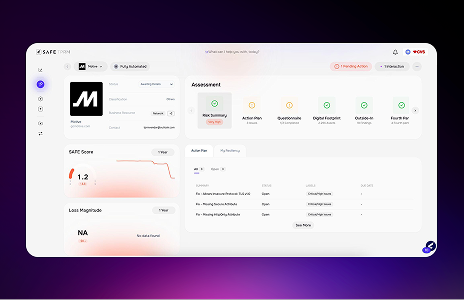

Transform Third-Party Risk Management with SAFE TPRM

Discover how SAFE TPRM enables 100% autonomous TPRM with agentic workflows to streamline the entire third-party lifecycle.

View Datasheet

Top 5 Third-Party Risk Management Challenges Every Practitioner Faces

Uncover the hidden costs of outdated TPRM workflows, and see how leading teams are automating [...]

View Whitepaper